The Problem We Solve

AI infrastructure costs are spiraling. GPU energy consumption is unsustainable. Inference latency limits real-time applications. Searching databases for useful information has become a massive computational challenge, one that relies on costly, power-hungry CPU/GPU servers and on highly specialized programming to deploy and maintain them.

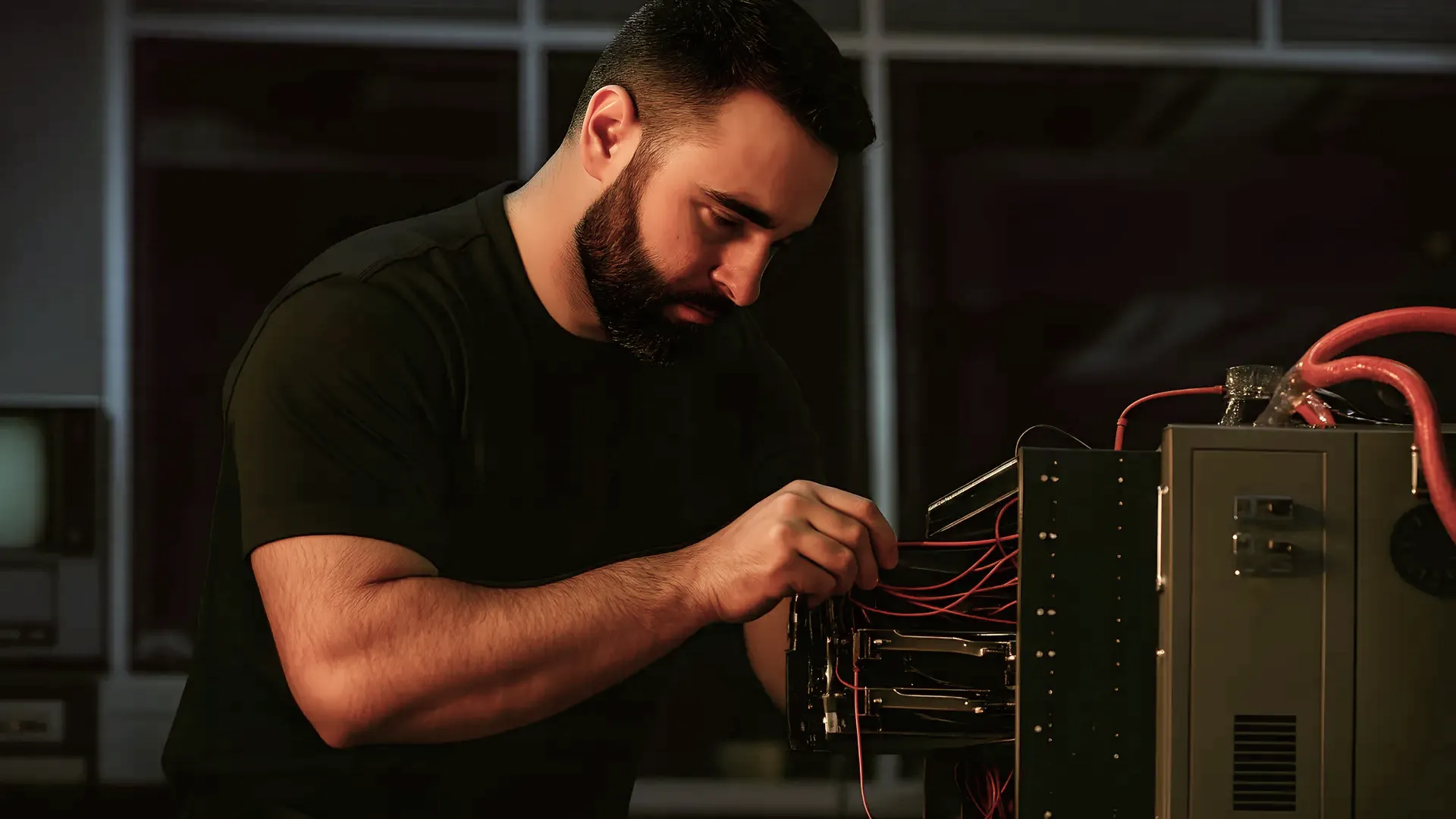

Silara addresses these challenges through our SUM neuromorphic architecture: hardware and operating system solutions that compute in parallel, learn in real time, combine deterministic latency with powerful stochastic forecasting engines, and make inference decisions traceable.